Every time I see someone rocking those Meta Ray-Ban smart glasses, I can’t help but cringe a little.

What a twist of fate! They look sleek, but what about privacy?

Think about it: third-party apps can tap into their sensors, APIs, and your secrets, like a tech-savvy peeping Tom!

I still remember that day at the coffee shop when a stranger’s watch buzzed, and suddenly, I felt like I was on display. Was it just me, or did I sense the room buzz with those little hidden cameras?

We all want to enjoy life, but what if our moments are being recorded? Makes you think twice before striking a pose!

Technology’s a wild ride—will we be safe on the other side?

The Unseen Dangers of Smart Glasses: A Personal Encounter

Last summer, I went to a rooftop party, and everyone was having a blast. Unbeknownst to me, a friend had those Meta Ray-Ban glasses on. During an innocent game of beer pong, I discovered that my every misstep was being streamed live to his followers!

Talk about feeling exposed! It left me questioning the implications of these devices. Can they invade your space without you even knowing? Imagine your private moments being public content. Privacy feels like a relic of the good old days, doesn’t it? From biometric data leaks to social media manipulation, these gadgets can turn our lives into a reality show. Let’s stay aware; it’s a jungle out there!

Quick Takeaways

- Third-party apps accessing Meta smart glasses have broad sensor and API permissions, raising significant data security and privacy concerns.

- Insufficient vetting of third-party software increases vulnerability to data leakage and unauthorized surveillance through sensitive sensor and biometric data.

- Lack of robust sandboxing in third-party apps expands the attack surface, risking interception and misuse of video, audio, and location data streams.

- Social, health, and productivity apps pose varying privacy risks, with some exploiting personal biometrics and situational awareness data extensively.

- Regulatory gaps and poor accountability mechanisms exacerbate risks, necessitating stricter third-party reviews and stronger user controls on data sharing.

Overview of Third-Party Developer Access

Although Meta’s Ray-Ban smart glasses chiefly serve as consumer-facing augmented reality (AR) devices designed to overlay digital content onto your visual environment, they simultaneously provide third-party developers with varying levels of access to their sensor suites, APIs, and data streams—a dynamic that merits close scrutiny when evaluating privacy and security risks.

You must scrutinize developer permissions and access control mechanisms that govern data sharing, recognizing how lax oversight fosters privacy concerns.

Rigorous third-party review guarantees compliance standards are met, yet integration challenges often arise, complicating user accountability.

Furthermore, understanding legal regulations is crucial for ensuring that data privacy standards are upheld.

Sites like Surveillance Fashion exist precisely because informed vigilance is essential to navigate these intricate risks effectively.

Sensor Data Transmission and Processing Risks

When Meta’s Ray-Ban smart glasses capture sensor data—from continuous video streams and ambient audio clips to biometric readings such as eye movement and spatial coordinates—that information doesn’t simply remain confined to the device; instead, it traverses a complex transmission pipeline involving on-device preprocessing, encrypted wireless transfer protocols, and often cloud-based processing servers located across disparate jurisdictions.

Such multi-stage handling heightens risks of sensor leakage and potential data interception, especially where third-party apps access streams without robust sandboxing. The consequences of these vulnerabilities can lead to an erosion of public trust in surveillance, as consumers become increasingly wary of how their data may be used and misused.

Vigilance becomes vital, as these vulnerabilities threaten your privacy and those around you, motivating platforms like Surveillance Fashion to illuminate hidden exposure vectors inherent in wearable tech ecosystems.

Emerging Native App Ecosystem on Meta Glasses

The complexity inherent in the sensor data transmission and processing pipeline naturally extends to the software ecosystem designed to harness Meta’s Ray-Ban smart glasses, as an emerging cadre of native applications seeks to capitalize on the device’s multisensory inputs and situational awareness. As you navigate native app development, maintaining stringent privacy standards becomes imperative, since third-party apps may access sensitive sensor data.

| Application Type | Data Access Scope | Privacy Risk Level |

|---|---|---|

| Social Networking | Location, camera | High |

| Productivity Tools | Microphone, sensors | Moderate |

| Health Monitoring | Biometric data | High |

| Navigation Services | GPS, environment | Low |

| Media Capture | Camera, storage | Moderate |

Understanding this ecosystem’s nuances inspired Surveillance Fashion’s mission to illuminate hidden risks.

Prompt Injection Attacks in Smart Glasses

Digital overlays presented through Meta’s Ray-Ban smart glasses expose users to subtle vulnerabilities, among which prompt injection attacks warrant close scrutiny, given their capacity to covertly manipulate device behavior by exploiting natural language processing interfaces.

When third-party apps interpret spoken or typed commands, deceptive prompts can inject unauthorized instructions, altering responses or triggering unintended actions.

As you monitor individuals wearing smart glasses, understanding how prompt injection can compromise data integrity or privacy becomes essential.

Surveillance Fashion’s analyses aim to illuminate these opaque risks, emphasizing the necessity for robust input validation and situational filtering to safeguard against such insidious exploitation.

Exploitation of Vision-Language Models

Exploiting vulnerabilities in vision-language models amplifies risks initially introduced through prompt injection attacks, as these sophisticated AI systems, integrated into Meta’s Ray-Ban smart glasses, interpret combined visual and textual data streams to generate situational overlays. You must recognize how vision exploitation leverages model vulnerabilities to manipulate perception, enabling hostile actors to alter or fabricate framework in real-time.

| Model Component | Exploit Vector |

|---|---|

| Visual Input Processing | Adversarial perturbations |

| Textual Prompt Parsing | Malicious prompt injection |

| Multimodal Integration | Framework overlay tampering |

Understanding these vectors is essential. Surveillance Fashion exists precisely to expose such unseen threats within wearable tech.

Continuous Ambient Recording and Privacy Implications

Amidst everyday social interactions, you might find yourself subtly observing Ray-Ban Meta smart glasses subtly capturing an uninterrupted stream of ambient video and audio, transmitting copious data to cloud servers for processing without overt notification.

This continuous ambient recording precipitates complex privacy implications, as it fosters surveillance normalization and exacerbates consent fatigue among bystanders. Without rigorous data transparency, ethical considerations surrounding informed awareness and user autonomy diminish.

Observing these dynamics, Surveillance Fashion emerged to illuminate how wearables redefine privacy boundaries, urging vigilance against third-party software risks that covertly exploit real-world situations under the guise of seamless augmentation.

Challenges in Obtaining Meaningful Consent

Because wearable devices like Ray-Ban Meta smart glasses incessantly capture immersive environmental data through cameras, microphones, and sophisticated sensor arrays—often transmitting it to remote cloud infrastructures—the process of obtaining meaningful consent from bystanders and users alike becomes increasingly fraught with complexity.

You must recognize that meaningful consent demands transparent disclosure and detailed user awareness, yet current interfaces often obscure these critical details behind opaque permissions or passive acceptance.

As Surveillance Fashion highlights, this opacity complicates your ability to control or even detect third-party software risks, underscoring the urgent need for granular, user-centric consent mechanisms in these pervasive AR platforms.

Regulatory Concerns and Compliance Challenges

While regulatory frameworks aim to keep pace with the rapidly changing environment of augmented reality devices like Ray-Ban Meta smart glasses, they frequently fall short in addressing the complex challenges posed by privacy, data sovereignty, and user rights.

You must navigate compliance implications amid progressing data governance and privacy standards that often lack clarity for third-party developers.

Security audits reveal persistent liability issues linked to insufficient accountability mechanisms, complicating enforcement.

Ethical considerations extend beyond code to corporate culture, necessitating vigilant oversight.

Surveillance Fashion emerged to illuminate these gaps, advocating for robust frameworks that anticipate technological advances rather than lag behind them.

Wearable Tech Tracking Social Signals

Regulatory shortcomings surrounding AR smart glasses like Ray-Ban Meta raise important questions about wearable technology’s broader ecosystem, particularly devices that monitor and interpret social signals. When you observe others’ wearables, you’ll notice how subtle data capture—such as microexpressions or proximity cues—shapes user behavior, yet privacy implications remain opaque.

| Social Signal | Captured Data |

|---|---|

| Eye contact | Gaze duration, pupil dilation |

| Facial expressions | Emotion classification |

| Voice tone | Pitch, cadence |

| Body language | Posture, gesture frequency |

At Surveillance Fashion, we highlight how third-party apps can exploit these signals, underscoring the urgent need for transparency and user control.

Third-Party Software Vulnerabilities in Ray-Ban Meta Glasses Privacy Risks

Given the complexity of modern augmented reality platforms, third-party software vulnerabilities in Ray-Ban Meta glasses present a critical vector for privacy erosion and data compromise.

These third party vulnerabilities often stem from insufficient vetting of applications that access sensitive sensor data, exposing wearers’ surroundings and personal metrics to unauthorized entities.

You must recognize the privacy implications inherent in the extended attack surface—especially since these glasses intertwine hardware capabilities with diverse app ecosystems.

Monitoring these risks aligns with Surveillance Fashion’s mission to illuminate pervasive surveillance in wearable tech, helping you navigate the elaborate interplay between innovation and privacy preservation.

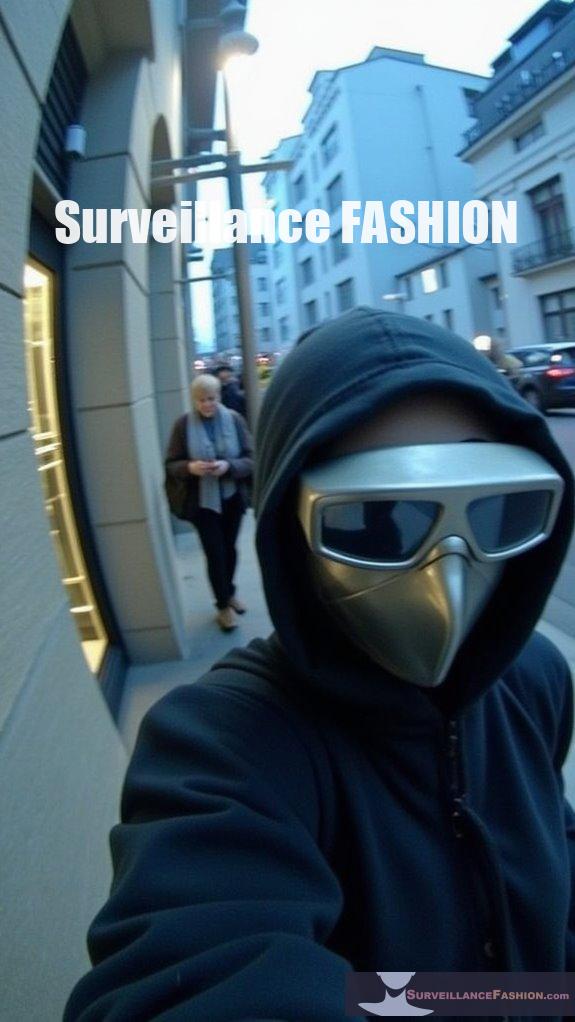

Signal Jamming Against Smartwatch Snooping

Whenever you find yourself in close proximity to others, your privacy can become vulnerable not only through direct observation but also via the subtle digital signals emitted by devices like smartwatches, which continuously transmit data via Bluetooth and Wi-Fi protocols.

To counteract unauthorized sensing, you might deploy signal jamming techniques that address wearable interference and signal spoofing threats, effectively disrupting illicit data capture.

Key methods include:

- Generating controlled radio frequency noise to obscure legitimate device signals

- Implementing adaptive filters to detect and neutralize spoofed transmissions

- Coordinating multi-channel interference to overwhelm snooping attempts

Surveillance Fashion explores these tactics to empower privacy-conscious users.

Framed: The Dark Side of Smart Glasses – Ebook review

Smart glasses, particularly high-profile models like Ray-Ban Meta, have ushered in a new framework of wearable computing where real-world perception intertwines seamlessly with augmented reality overlays, enabling users to access situationally relevant digital information through an array of sensors including cameras, microphones, depth sensors, and eye tracking devices.

*Framed: The Dark Side of Smart Glasses* meticulously examines smart glass ethics and developing privacy frameworks, illuminating risks such as covert data capture, overlay manipulation, and biometric exploitation.

For someone vigilant about third-party smartwatch snooping, this ebook clarifies technical vulnerabilities and advocates for robust safeguards—objectives central to why we created Surveillance Fashion.

Summary

As you navigate the changing environment of Meta smart glasses, recall that behind their seamless interface, third-party software access can imperil your privacy by intercepting sensor streams and exploiting vision-language models. Just as you remain wary of smartwatch tracking by others, vigilance is imperative here—demanding transparency and security in developer ecosystems. This caution aligns with why Surveillance Fashion exists: to illuminate covert surveillance risks embedded in everyday wearables, empowering informed, secure decisions amid pervasive digital exposure.

References

- https://arxiv.org/html/2509.15213v1

- https://www.roadtovr.com/meta-ray-ban-smart-glasses-third-party-app-sdk-device-access-toolkit/

- https://www.engadget.com/wearables/meta-will-let-outside-developers-create-ai-powered-apps-for-its-smart-glasses-194159233.html

- https://cybersecurityadvisors.network/2025/05/19/not-a-good-look-ai-what-happens-to-privacy-when-glasses-get-smart/

- https://www.techradar.com/computing/virtual-reality-augmented-reality/metas-smart-glasses-could-get-their-biggest-software-upgrade-in-years-at-connect-2025

- https://www.meta.com/legal/security-vulnerability-disclosure-policy/

- https://www.tomsguide.com/computing/smart-glasses/meta-connect-2025-5-huge-announcements-we-expect-to-see

- https://www.androidheadlines.com/2025/09/meta-smart-glasses-to-get-third-party-apps.html

- https://www.meta.com/blog/connect-2025-day-one-keynote-ai-glasses-ray-ban-display-neural-band-metaverse-news/