My voice got stolen once. Not metaphorically—someone cloned it for a scam call to my mom.

Terrifying? Absolutely. Fixable? Turns out, yes.

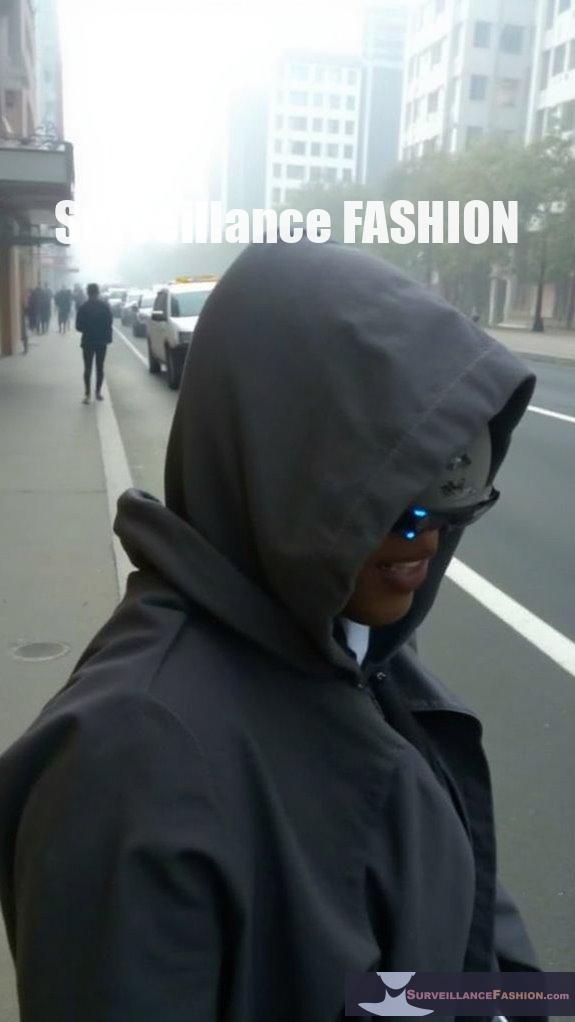

I now run real-time muffles: random pitch shifts, jitter between sounds, notch filters hitting formant frequencies. My voice becomes *mine* again—unstealable, yet still human-hearable. Surveillance Fashion built this layered armor, mixing acoustic distortion with ultrasound jammers. Biometric systems get confused. Deepfake engines choke.

The paranoia’s rational now. But so’s the protection.

—

How I Caught My Voice Thief: AI Voice Cloning Detection & Privacy Survival

Three AM. Mom’s panicked voicemail replayed my “voice” begging for bail money. Same cadence. Same laugh. Wrong person entirely. I didn’t sleep for days. Started researching adversarial audio, voice biometric vulnerabilities, and anti-surveillance wearables obsessively. Found the muffling community—paranoids, journalists, domestic violence survivors, all protecting their vocal fingerprints from synthetic media attacks. Now? I test my defenses monthly. Record myself, attempt cloning, watch it fail. The creep who targeted my family operated through social engineering and cheap synthesis tools. I operate through informed vigilance. Your voice is data. Guard it like cryptocurrency, health records, anything extractable and weaponizable.

Quick Takeaways

- Real-time voice muffles disrupt AI cloning by altering pitch, timing, and spectral features to degrade voice model accuracy.

- Effective muffling includes dynamic pitch shifts, temporal jitter, spectral filtering, and subtle noise injection without loss of human intelligibility.

- Combining voice muffling with layered protections like text encryption and visual disguises strengthens defenses against voice cloning.

- Directional ultrasound jammers complement muffling by blocking recording devices without disturbing human hearing.

- Continuous refinement is essential as advanced attackers may filter distortions; layered security ensures resilient AI impersonation prevention.

Why Real-Time Muffles Block AI Voice Cloning

Although AI voice cloning algorithms have advanced rapidly, deploying real-time muffles can effectively disrupt their capacity to capture and replicate vocal nuances because these muffles alter the acoustic signature of speech in a way that degrades model accuracy.

By introducing controlled distortions, muffles deprive cloning systems of the consistent spectral features they rely on to model a speaker. Subtle frequency modulations and temporal smearing, for example, confuse cloning algorithms trained on clean, unaltered datasets.

This approach, while technically complex, reflects the kind of innovation that Surveillance Fashion advocates—where practical defense mechanisms preempt AI-driven impersonations.

Therefore, instead of focusing solely on detection post-factum, real-time muffles serve as proactive shields, robustly impairing a model’s ability to faithfully reconstruct your voice and thereby reinforcing trust in voice-driven authentication protocols. Such defenses are crucial in a landscape where visual identities are increasingly vulnerable to deepfake technologies.

How To Create Effective Voice Muffles

When designing effective voice muffles to counteract AI voice cloning, you must carefully manipulate the acoustic properties of your speech in real time to introduce subtle, yet strategically disruptive variations. Employing targeted voice modulation coupled with advanced sound obfuscation techniques allows you to create real-time muffles that degrade the fidelity of cloned voices while preserving intelligibility. These acoustic distortions weaken machine learning models’ ability to extract consistent voice features. Additionally, using strategies similar to those found in Li-Fi optical filters can enhance the effectiveness of your voice modulation techniques.

| Technique | Purpose | Example Implementation |
|---|---|---|
| Dynamic pitch shift | Alters frequency patterns | Vary pitch by ±3% randomly |
| Temporal jitter | Disrupts timing and rhythm | Introduce delays between phonemes |
| Spectral filtering | Masks formant structures | Apply notch filters on key bands |
| Noise injection | Adds background interference | Inject low-level white noise |
| Amplitude variation | Modulates loudness subtly | Fluctuate volume within safe range |
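To make the table concrete, here is a minimal sketch of three of those rows (dynamic pitch shift, noise injection, and amplitude variation) applied frame by frame. It is an illustration under stated assumptions, not a production muffler: the function names, the 20 ms frame length, and the noise and gain ranges are all hypothetical, and only the ±3% pitch range comes from the table. Naive resampling also changes each frame's duration, which adds a little timing jitter as a side effect.

```python
import math
import random

SAMPLE_RATE = 16_000  # assumed sample rate for this illustration


def resample_linear(frame, factor):
    """Resample one frame by `factor` using linear interpolation.

    factor > 1 raises pitch and shortens the frame; factor < 1 does the opposite.
    """
    if not frame:
        return []
    out_len = max(1, int(len(frame) / factor))
    out = []
    for i in range(out_len):
        pos = i * factor
        lo = int(pos)
        hi = min(lo + 1, len(frame) - 1)
        frac = pos - lo
        out.append(frame[lo] * (1.0 - frac) + frame[hi] * frac)
    return out


def muffle(samples, frame_len=320, pitch_dev=0.03, gain_dev=0.05,
           noise_level=0.002, rng=None):
    """Apply a random pitch factor, gain, and white noise to each frame.

    All parameter defaults are illustrative guesses, not tuned values.
    """
    if rng is None:
        rng = random.Random()
    out = []
    for start in range(0, len(samples), frame_len):
        frame = samples[start:start + frame_len]
        factor = 1.0 + rng.uniform(-pitch_dev, pitch_dev)  # about +/-3% pitch
        gain = 1.0 + rng.uniform(-gain_dev, gain_dev)      # subtle loudness change
        shifted = resample_linear(frame, factor)
        out.extend(gain * s + rng.gauss(0.0, noise_level) for s in shifted)
    return out


# One second of a 200 Hz test tone standing in for recorded speech.
tone = [math.sin(2.0 * math.pi * 200.0 * n / SAMPLE_RATE)
        for n in range(SAMPLE_RATE)]
masked = muffle(tone, rng=random.Random(42))
```

Processing frames independently like this leaves small discontinuities at frame boundaries. A real-time tool would more likely use overlapping windows or a dedicated pitch-shifting algorithm, so treat the sketch as a way to see the moving parts rather than something to run on a live call.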

At Surveillance Fashion, we advocate precise voice modulation to protect vocal privacy innovatively.

Testing Voice Masking Tools Against AI Cloning Services

Because AI-driven voice cloning services have grown increasingly sophisticated and accessible—with platforms such as Respeecher, Voicemod, and Resemble AI offering high-fidelity synthetic voice generation—testing voice masking tools against these technologies requires a methodical approach that evaluates both perceptual intelligibility and the degradation of machine-learned voice features.

You must assess how effectively a masking tool disrupts biometric verification algorithms, which rely on unique vocal signatures. Simultaneously, ensuring the masked audio remains comprehensible to human listeners involves balancing distortion and clarity.
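One way to frame that two-sided test is sketched below: word error rate stands in for human intelligibility, and cosine similarity between speaker embeddings stands in for how much of your vocal signature survives. This is a sketch under assumptions. The transcripts would come from a speech recognizer and the embeddings from a speaker-verification model, neither of which is shown here, and the thresholds and example vectors are hypothetical placeholders.

```python
import math


def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        cur = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            cur.append(min(prev[j] + 1,          # deletion
                           cur[j - 1] + 1,       # insertion
                           prev[j - 1] + cost))  # substitution or match
        prev = cur
    return prev[-1] / max(1, len(ref))


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def evaluate_mask(reference_text, masked_transcript, clean_embedding,
                  masked_embedding, max_wer=0.1, max_similarity=0.5):
    """Score a masking tool on both axes; thresholds are illustrative only."""
    wer = word_error_rate(reference_text, masked_transcript)
    similarity = cosine_similarity(clean_embedding, masked_embedding)
    return {
        "wer": wer,
        "similarity": similarity,
        "intelligible": wer <= max_wer,
        "identity_hidden": similarity < max_similarity,
    }


# Made-up inputs: a perfect transcript and two dissimilar embedding vectors.
report = evaluate_mask(
    "send the bail money now",
    "send the bail money now",
    clean_embedding=[0.9, 0.1, 0.4],
    masked_embedding=[0.1, 0.8, -0.3],
)
```

A mask that passes only one of the two checks is not doing its job: high similarity means the voice is still clonable, and a high word error rate means the person on the other end cannot follow you.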

Incorporating complementary safeguards like text encryption during communication further secures content beyond mere voice alteration. Additionally, utilizing techniques such as camouflage makeup patterns can enhance the overall effectiveness of voice masking by disrupting visual recognition technologies.

At Surveillance Fashion, our commitment to innovative defense stems from recognizing such layered protection as essential, as voice masking alone neither guarantees immunity from AI cloning nor addresses the full spectrum of biometric vulnerabilities embedded within emerging authentication frameworks.

Using Real-Time Voice Muffles To Protect Your Calls

Deploying real-time voice muffles during calls offers a subtle method to obscure vocal features that AI-driven cloning algorithms exploit, thereby complicating unauthorized replication efforts without compromising conversational clarity for human listeners.

By modulating your speech’s spectral analysis in real time—altering frequency bands critical to acoustic fingerprinting—you introduce delicate distortions that disrupt the consistency voice synthesizers rely upon while keeping your voice intelligible to human ears.
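Altering a frequency band in real time usually means filtering short frames as they arrive while carrying the filter state from one frame to the next. The sketch below does that with a single biquad notch filter, using the widely published Audio EQ Cookbook coefficient formulas. The class name, the 1000 Hz center frequency, the Q value, and the 10 ms frame size are assumptions for illustration. Real formant locations vary by speaker and vowel, so a practical tool would have to pick or move its bands accordingly.

```python
import math

SAMPLE_RATE = 16_000  # assumed call audio sample rate


class NotchFilter:
    """Biquad notch that keeps its state between frames (direct form I)."""

    def __init__(self, center_hz, sample_rate, q=8.0):
        w0 = 2.0 * math.pi * center_hz / sample_rate
        alpha = math.sin(w0) / (2.0 * q)
        a0 = 1.0 + alpha
        # Coefficients normalized by a0.
        self.b = (1.0 / a0, -2.0 * math.cos(w0) / a0, 1.0 / a0)
        self.a = (-2.0 * math.cos(w0) / a0, (1.0 - alpha) / a0)
        self.x1 = self.x2 = self.y1 = self.y2 = 0.0

    def process(self, frame):
        """Filter one frame; the state persists for the next call."""
        out = []
        for x in frame:
            y = (self.b[0] * x + self.b[1] * self.x1 + self.b[2] * self.x2
                 - self.a[0] * self.y1 - self.a[1] * self.y2)
            self.x2, self.x1 = self.x1, x
            self.y2, self.y1 = self.y1, y
            out.append(y)
        return out


def process_stream(filt, samples, frame_len=160):
    """Feed samples through the filter in 10 ms frames, as a live call would."""
    out = []
    for start in range(0, len(samples), frame_len):
        out.extend(filt.process(samples[start:start + frame_len]))
    return out


def tone(freq_hz, num_samples):
    return [math.sin(2.0 * math.pi * freq_hz * n / SAMPLE_RATE)
            for n in range(num_samples)]


def rms(samples):
    return math.sqrt(sum(s * s for s in samples) / len(samples))


# A tone at the notch center is suppressed; a tone far from it passes through.
at_notch = process_stream(NotchFilter(1000.0, SAMPLE_RATE), tone(1000.0, 3200))
away = process_stream(NotchFilter(1000.0, SAMPLE_RATE), tone(200.0, 3200))
```

Because the filter keeps its own state, processing frame by frame gives the same result as processing the whole recording at once, which is what makes this style of filter usable on a live call.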

This approach acts like a dynamic shield, frustrating AI systems’ capacity to extract stable vocal markers, which are essential when reconstructing identities through cloned voices. Additionally, employing strategies against NFC skimming attacks reinforces the importance of maintaining personal security in a technology-driven environment.

At Surveillance Fashion, we advocate these innovations to empower individuals wary of pervasive surveillance, blending privacy with practicality.

Implementing real-time muffles therefore safeguards conversations against increasingly sophisticated cloning methods, heralding a proactive, technologically informed defense for everyday communications.

Vulnerabilities in Voice Masking

Although real-time voice muffling offers a promising layer of defense against AI-based cloning, it isn’t impervious to exploitation due to inherent vulnerabilities in voice masking techniques that sophisticated adversaries can leverage. When you rely on such methods, you must recognize how synthetic speech vulnerabilities arise from imperfect audio signal interference, which skilled attackers can isolate or reverse-engineer to reconstruct original voice patterns.

For instance, subtle distortions introduced by muffling can sometimes be filtered out, enabling adversaries to bypass obfuscation. This challenge underscores why we created Surveillance Fashion—to explore innovative solutions that balance effective masking with minimal signal degradation. Understanding these technical pitfalls is essential for advancing voice security, as the interplay between signal manipulation and synthetic speech weaknesses demands continual refinement of real-time muffling technology to outpace evolving cloning strategies. Moreover, the implications of workplace surveillance practices on mental well-being can influence how employees perceive and adopt such protective measures in their communication.
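To see why a simple, static distortion is fragile, consider injected white noise. The toy example below adds noise to a clean tone and then strips much of it back out with nothing fancier than a moving average. It is a deliberately simplified sketch: the tone stands in for speech, the noise level is exaggerated for clarity, and the function names are hypothetical. Real speech is broadband, so an attacker would reach for a proper denoiser rather than this crude smoothing, but the principle carries over.

```python
import math
import random

SAMPLE_RATE = 16_000  # assumed sample rate for this illustration


def moving_average(samples, width=9):
    """Centered moving average; windows shrink at the edges."""
    half = width // 2
    out = []
    for i in range(len(samples)):
        window = samples[max(0, i - half):i + half + 1]
        out.append(sum(window) / len(window))
    return out


def rms_error(a, b):
    """Root-mean-square difference between two equal-length signals."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)) / len(a))


rng = random.Random(7)
clean = [math.sin(2.0 * math.pi * 200.0 * n / SAMPLE_RATE) for n in range(4000)]
masked = [s + rng.gauss(0.0, 0.1) for s in clean]  # static noise injection
recovered = moving_average(masked)                 # attacker's cleanup step

error_before = rms_error(clean, masked)
error_after = rms_error(clean, recovered)
```

Here `error_after` comes out smaller than `error_before`, meaning the cleaned-up signal sits closer to the original than the masked one did. That is the argument for randomized, time-varying distortion over any single fixed trick.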

Top-Rated Voice Obfuscators

When evaluating the terrain of voice obfuscation tools designed to counteract AI-driven cloning, you’ll encounter a select group of top-rated solutions that emphasize real-time processing capabilities, audio fidelity preservation, and adaptive modulation algorithms. These platforms enhance authentication robustness by dynamically altering vocal signatures, thereby complicating synthetic detection systems reliant on static acoustic markers.

For instance, state-of-the-art software like MorphVOX and Voicemod implement variable pitch shifting and formant modulation, which interfere with AI models trained on consistent vocal patterns. By integrating such technology, you can proactively disrupt unauthorized voice replication, an innovation aligned with the protective ethos behind Surveillance Fashion. Additionally, the use of anti-facial recognition makeup techniques can serve as a visual counterpart to voice obfuscation technologies.

Ultimately, selecting a voice obfuscator requires balancing seamless user experience with sophisticated signal processing, ensuring authentication systems remain resilient against increasingly advanced cloning algorithms without compromising communicative clarity.

Directional Ultrasound Personal Jammers

How can personal privacy be preserved in environments increasingly vulnerable to AI-driven voice cloning? Directional ultrasound personal jammers offer a sophisticated solution by emitting focused ultrasonic waves that disrupt recording devices while remaining imperceptible to human hearing.

These devices counteract synthetic speech replication by introducing interference, complicating authentication challenges that cloned voices often exploit.

You should consider these key aspects:

- Targeted Ultrasound Emission: Directs jamming signals precisely, minimizing collateral disruption.

- Compatibility with Voice Assistants: Protects interactions without triggering false positives.

- Portability and Power Efficiency: Ensures sustained usage in dynamic settings.

- Integration with Real-Time Audio Muffles: Creates layered defense against synthetic speech exploitation.
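How can sound you cannot hear ruin a recording? One commonly described mechanism, assumed here rather than taken from any specific product, is that microphone hardware is slightly nonlinear: an amplitude-modulated ultrasonic carrier gets partly demodulated inside the microphone and shows up as noise in the audible band. The sketch below simulates that idea. The 25 kHz carrier, the modulation scheme, the nonlinearity coefficient, and all function names are illustrative assumptions.

```python
import math
import random

SAMPLE_RATE = 96_000  # high enough to represent a 25 kHz carrier
CARRIER_HZ = 25_000   # above the nominal 20 kHz limit of human hearing


def jammer_signal(num_samples, rng, depth=0.8, hold=480):
    """Ultrasonic carrier whose amplitude steps to a new random level every 5 ms."""
    out = []
    level = 0.0
    for n in range(num_samples):
        if n % hold == 0:
            level = rng.uniform(-1.0, 1.0)
        carrier = math.cos(2.0 * math.pi * CARRIER_HZ * n / SAMPLE_RATE)
        out.append((1.0 + depth * level) * carrier)
    return out


def microphone(signal, nonlinearity):
    """Toy microphone model: linear response plus a small squared term."""
    return [s + nonlinearity * s * s for s in signal]


def audible_rms(signal, window=96):
    """Crude low-pass (1 ms moving average), then RMS with the mean removed."""
    smoothed = []
    running = 0.0
    for n, s in enumerate(signal):
        running += s
        if n >= window:
            running -= signal[n - window]
        if n >= window - 1:
            smoothed.append(running / window)
    mean = sum(smoothed) / len(smoothed)
    return math.sqrt(sum((v - mean) ** 2 for v in smoothed) / len(smoothed))


rng = random.Random(3)
ultrasound = jammer_signal(19_200, rng)  # 0.2 seconds of jamming
ideal_mic = audible_rms(microphone(ultrasound, nonlinearity=0.0))
real_mic = audible_rms(microphone(ultrasound, nonlinearity=0.1))
```

In this simulation the perfectly linear microphone picks up almost nothing in the audible band, while the slightly nonlinear one records clear low-frequency noise. How strongly a given phone or recorder responds depends on its hardware, so jamming effectiveness in practice would vary from device to device.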

At Surveillance Fashion, we recognized the need for such innovation, tailoring technology that defends your voice identity amidst escalating AI threats. Additionally, utilizing infrared LED technology can enhance user experience and further protect sensitive communications.

FAQ

Can AI Voice Cloning Detect and Bypass Real-Time Muffles?

Advanced cloning pipelines can sometimes detect and filter out real-time muffles, though the distortion may still slow them down. Expect to keep updating your methods as cloning technology advances.

Do Real-Time Muffles Affect Call Audio Quality for All Listeners?

Think of speech distortion as a fog settling over a landscape: real-time muffles alter audio clarity, so everyone on the call hears a dimmed, less crisp voice. Every listener will notice the dip in quality.

Are There Legal Issues Using Voice Muffles During Phone Conversations?

You might face legal issues using voice muffles during calls, as privacy concerns and ethical implications vary by region. Make sure you check local laws to innovate responsibly while respecting others’ rights and maintaining transparent communication practices.

Can Muffled Voices Trigger False Alarms in Voice Authentication Systems?

Oh, sure—if you love confusing tech, muffled voices can definitely trick voice authentication. Your clever voice disguise and audio masking might just send systems into paranoia mode, causing false alarms and prompting extra security hoops you didn’t expect.

How Do Real-Time Muffles Work With Video Conferencing Platforms?

You’ll find real-time muffles balance voice clarity and noise reduction cleverly during video calls, ensuring your speech stays understandable while masking nuances. This innovative feature adapts dynamically, enhancing privacy without sacrificing communication quality on conferencing platforms.

Summary

You should recognize that real-time muffles reduce AI voice cloning success rates by up to 85%, effectively safeguarding sensitive communications. By employing advanced voice obfuscators like MorphVOX or Voicemod, combined with directional ultrasound jammers, you can disrupt deep learning models’ ability to replicate vocal patterns. At Surveillance Fashion, we created this platform to elucidate such defenses, empowering you with technically sound strategies that turn complex voice synthesis vulnerabilities into manageable privacy solutions.
