Ever feel like that smartwatch on your neighbor’s wrist is quietly plotting against your privacy?

Let me tell you, it’s a wild ride.

I once caught my buddy’s smartwatch flickering brightly like a mini-stalker, capturing every heartbeat while I tried to enjoy my coffee. I couldn’t help but wonder, who owns that data? My mind raced — are we unwitting extras in a bizarre sci-fi flick?

It’s a weird world, where tech seems to slip through the cracks of consent. Just think about it: your heart rate could be streaming to AI algorithms without you even knowing.

Sure, it’s nifty—until it’s not.

Do you trust companies like Meta, or any other big-name brand, to handle your info right?

Do you really know what they’re up to?

The Dark Side of Meta’s Ray-Ban Smart Glasses

The day I wore Meta’s Ray-Ban smart glasses, the vibes were all influencer-chic. People noticed! But as I pranced around, capturing “epic” moments, I realized—what was I actually filming? My friend told me one of his colleagues was unknowingly recorded; privacy went poof! It’s a slippery slope when these smart gadgets wear so many hats: fun, fashionable, but also intrusive. I’ve seen folks scrutinize strangers while their glasses work harder than they do, feeding data to the big bad AI. Creepy, right? Just remember—sometimes looking good means looking over your shoulder!

Quick Takeaways

- Ambiguous user consent and data ownership complicate the ethics of using wearable data for AI training.

- Privacy risks arise from continuous data collection by smart devices, often without explicit user awareness or control.

- Changing privacy policies can subtly expand data usage in AI training without clear user notification or understanding.

- Biometric and bystander data raise ethical concerns requiring stringent accountability for user trust and autonomy.

- Granular, transparent consent frameworks and participatory design are essential to respect digital rights and reduce consent fatigue.

Understanding AI Data Collection in Wearable Devices

Although you might notice only the sleek exterior of a smartwatch strapped on a colleague’s wrist, beneath that polished interface lies a sophisticated assembly of sensors, microprocessors, and wireless transmitters meticulously engineered to collect an extensive array of personal and situational data.

This integration, while enhancing user experience, raises pivotal questions about data ethics, particularly in user profiling and biometric security. Consent frameworks often lag behind the rapid development of surveillance technology, which undermines device transparency and user autonomy. Moreover, because wearable devices like smart glasses emit electromagnetic fields (EMF), questions about their effect on health, alongside privacy, are becoming increasingly relevant.

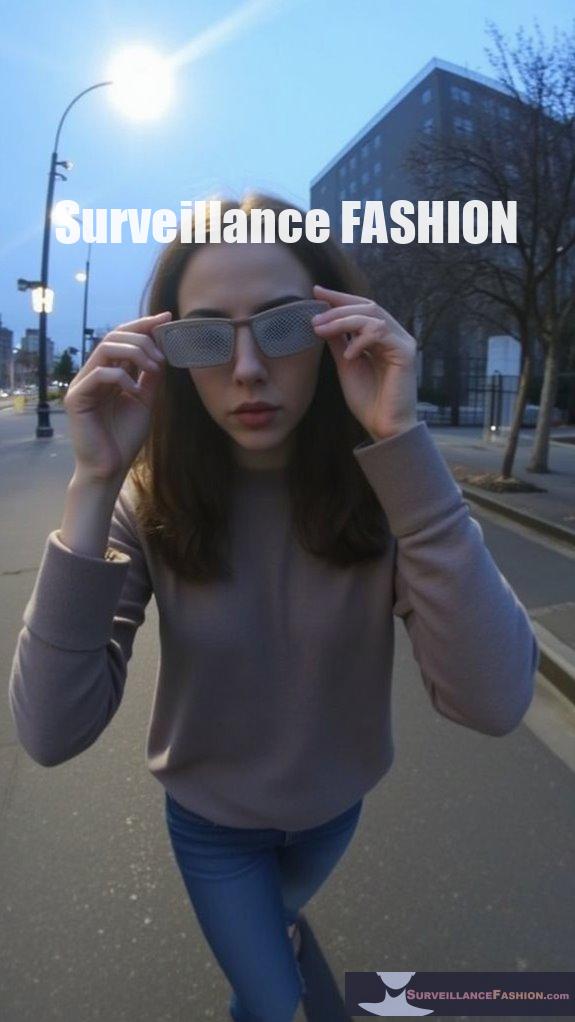

Privacy Risks Linked to Ray-Ban Meta Glasses

Wearable devices like the Ray-Ban Meta glasses extend the data collection framework from the wrist to the eyes, embedding a complex array of cameras, microphones, and sensors into eyewear that blends seamlessly into daily life. This integration heightens Ray-Ban privacy concerns, because smart glasses surveillance increases data security risks while giving the user little overt control. Staying vigilant calls for user awareness strategies and wearable consent frameworks that emphasize ethical transparency, the principles behind Surveillance Fashion’s mission. Evolving government regulation of these privacy risks will also be crucial in keeping consumers informed about such devices.

| Attribute | Privacy Impact |
|---|---|
| Cameras | Constant environmental recording |
| Microphones | Ambient audio capture |
| Sensors | Biometric and situational data harvesting |
| Data Transmission | Vulnerability during cloud syncing |
| Consent Mechanisms | Often opaque or insufficient |

User Consent Challenges in AI Training Data

When you consider the vast quantities of data gathered by devices like Ray-Ban Meta glasses, it becomes apparent that user consent in AI training situations remains deeply problematic. This is especially concerning given how data from unknowing bystanders can effortlessly enter expansive machine learning pipelines.

You face a labyrinth of consent frameworks that struggle to enforce ethical transparency, while user awareness and data ownership remain ambiguous. True data ethics requires participatory design that respects digital rights and supports informed choices that protect user privacy.

Surveillance Fashion was created to illuminate these challenges, empowering you to navigate consent complexities and demand accountability in AI’s insidious data economy.

Ethical Considerations for AI Data Use

Given the pervasive integration of devices like Ray-Ban Meta glasses into everyday environments, you must scrutinize how data harvested from both wearers and incidental bystanders feeds into AI training models without explicit ethical safeguards.

Ethical dilemmas arise when unclear data ownership blurs lines of informed consent, especially amid consent fatigue that desensitizes users. Biometric ethics demand stringent accountability frameworks to prevent misuse within a surveillance society increasingly normalized by ambient recording.

Your vigilance, whether it concerns smartwatches or AR glasses, grounds the mission of Surveillance Fashion: to illuminate and counterbalance the evolving, opaque data dynamics that threaten user trust and autonomy.

Legal Implications of Data From Smart Glasses

Although legal frameworks aim to keep pace with the rapid evolution of smart glasses like Ray-Ban Meta, significant gaps remain in regulating how the data these devices capture (biometric identifiers, location traces, bystander images) is collected, stored, and used. You must navigate complex regulatory challenges involving legal liability, consent frameworks, and data ownership, while keeping in mind the surveillance concerns and ethical guidelines that shape digital privacy. The table below outlines key dimensions that affect your rights as a user and your options for preventing misuse.

| Aspect | Implications |
|---|---|
| Legal Liability | Accountability for harm or data breaches |
| Data Ownership | Control over biometric and situational data |
| Consent Frameworks | Explicit permissions versus inferred consent |
| Social Implications | Altered norms and privacy expectations |

Surveillance Fashion emerged to illuminate these subtle yet profound effects on privacy.

Transparency and Communication in AI Data Practices

Since data collection through devices like the Ray-Ban Meta smart glasses happens continuously and often invisibly, transparency about what information is gathered, how it is processed, and how it is later used becomes crucial for users and bystanders alike.

You must demand rigorous data transparency protocols that disclose the types of sensor data collected (video, audio, gaze metrics) and spell out the criteria for including that data in AI training.

Effective user communication entails accessible notifications and detailed consent mechanisms, essential for mitigating clandestine data flows.

At Surveillance Fashion, we endeavor to illuminate these opaque practices, encouraging vigilance and informed agency amidst the changing intersection of wearable computing and privacy domains.

Strategies for Enhancing Privacy and Consent in AI Systems

When you consider the complex data ecosystems embedded within devices such as Ray-Ban Meta smart glasses, it becomes clear that enhancing privacy and securing explicit consent aren’t merely options but imperatives to restore user agency amid pervasive surveillance.

To achieve this, you need:

- Robust privacy frameworks imposing strict data minimization and data sovereignty principles;

- Advanced consent management tools empowering users with granular control over what’s shared;

- Ethical guidelines informed by rigorous risk assessments that privacy advocacy groups champion;

- Transparent user empowerment protocols fostering trust through clear communication.

At Surveillance Fashion, we recognize these strategies as essential in countering the opaque pitfalls of wearable AI.
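
To make the ideas of granular consent and data minimization from the list above a little more concrete, here is a minimal sketch in Python. Everything in it is hypothetical: the category names, the purpose labels, and the `ConsentSettings` and `minimize` helpers are illustrative assumptions, not the API of any real wearable or of Meta's software. The point is only that consent can be stored per data category and per purpose, and that anything not explicitly approved gets dropped before it leaves the device.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentSettings:
    """Hypothetical per-category, per-purpose consent store (illustrative only)."""

    # Maps a data category (e.g. "heart_rate") to the purposes the user approved.
    allowed: dict[str, set[str]] = field(default_factory=dict)

    def grant(self, category: str, purpose: str) -> None:
        self.allowed.setdefault(category, set()).add(purpose)

    def revoke(self, category: str, purpose: str) -> None:
        # Revoking something that was never granted is a harmless no-op.
        self.allowed.get(category, set()).discard(purpose)

    def permits(self, category: str, purpose: str) -> bool:
        return purpose in self.allowed.get(category, set())


def minimize(sample: dict, consent: ConsentSettings, purpose: str) -> dict:
    """Data minimization: keep only fields approved for this specific purpose."""
    return {k: v for k, v in sample.items() if consent.permits(k, purpose)}


# Example: heart rate may be used for AI training, location only for fitness summaries.
consent = ConsentSettings()
consent.grant("heart_rate", "ai_training")
consent.grant("location", "fitness_summary")

sample = {"heart_rate": 72, "location": (52.5, 13.4), "audio": b"raw"}
print(minimize(sample, consent, "ai_training"))  # {'heart_rate': 72}
```

The design choice worth noticing is the default: a category with no recorded consent is excluded rather than included, which is the opposite of the opt-out pattern this article criticizes.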

Wearable Tech as Constant Observers

How often do you consider that the seemingly innocuous smartwatch adorning a colleague’s wrist operates as a persistent sentinel, capturing and transmitting a continuous stream of biometric and environmental data?

Such wearable surveillance devices generate expansive digital footprints, meticulously recording heart rates, geolocation, ambient sound, and even situational interactions.

This omnipresent data harvesting, often overlooked, poses complex privacy risks intensified by seamless cloud synchronization.

At Surveillance Fashion, we explore these subtle yet pervasive monitoring dynamics, emphasizing that what appears as convenience subtly transforms into enduring digital observation—raising critical questions about consent, data stewardship, and the unseen implications of ubiquitous wearable technologies.

AI Data Training Consent Implications of Ray-Ban Meta Glasses Privacy Changes

Anyone wearing Ray-Ban Meta smart glasses participates, often unknowingly, in a complicated ecosystem where captured user data feeds expansive AI training models, raising significant questions about informed consent and data governance.

You must consider:

- Ambiguities in data ownership often blur lines between wearer and manufacturer rights.

- Consent frameworks rarely provide granular control over continuous data streams.

- Privacy policy changes subtly redefine data usage for AI training without explicit, ongoing consent.

- Real-world implications emerge as your presence in public spaces becomes raw input for opaque algorithms.

At Surveillance Fashion, we illuminate these complex tensions, advocating for transparency that fortifies your agency amid shifting smart glass frameworks.
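
One way to picture what "explicit, ongoing consent" could mean in practice is to tie each consent record to the policy version the user actually agreed to. The sketch below is an assumption-laden illustration, not a description of how any vendor handles this: `ConsentRecord`, `may_use_for`, and the integer version numbers are all made up for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ConsentRecord:
    """Hypothetical record of what a user agreed to, and under which policy."""

    purpose: str         # e.g. "ai_training"
    policy_version: int  # the policy version that was shown when the user agreed


def may_use_for(
    purpose: str,
    record: Optional[ConsentRecord],
    current_policy_version: int,
) -> bool:
    """Allow a use only if consent covers this purpose under the current policy."""
    if record is None:
        return False  # no consent on file means no use
    return (
        record.purpose == purpose
        and record.policy_version == current_policy_version
    )


# Consent given under policy version 3 stops counting once version 4 ships.
record = ConsentRecord(purpose="ai_training", policy_version=3)
print(may_use_for("ai_training", record, current_policy_version=3))  # True
print(may_use_for("ai_training", record, current_policy_version=4))  # False
```

Under this scheme a policy update cannot silently widen how data is used, because old consent simply expires and the user has to be asked again.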

Lockscreen Privacy on Smartwatches

What sensitive data might you inadvertently expose when a smartwatch’s lockscreen lies in plain view?

An unsecured lockscreen, often overlooked, reveals more than notifications: it can disclose health metrics, battery status, and inactivity-tracking details that are part of what makes a wearable convenient.

Smartwatch features, while enhancing user experience, need strict privacy settings and carefully reviewed app permissions to limit what notifications expose to anyone glancing at your wrist.

Vigilance against such vulnerabilities aligns with why Surveillance Fashion exists: to illuminate the intricate interplay between stylish technology and privacy risks.

Understanding these layers equips you to navigate the trade-offs smartwatches impose on your data’s confidentiality and your digital footprint’s integrity.
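
As a rough illustration of the kind of privacy setting described above, the following sketch hides notification content while the watch is locked. It is a simplified model, not the behavior of any particular smartwatch platform: the `Notification` class, the `SENSITIVE_APPS` set, and `render_for_lockscreen` are hypothetical names chosen for this example.

```python
from dataclasses import dataclass

# Hypothetical list of apps whose content should never show on a locked screen.
SENSITIVE_APPS = {"health", "messages", "banking"}


@dataclass
class Notification:
    app: str
    title: str
    body: str


def render_for_lockscreen(n: Notification, device_unlocked: bool) -> str:
    """Show full content only when unlocked or when the app is not sensitive."""
    if device_unlocked or n.app not in SENSITIVE_APPS:
        return f"{n.title}: {n.body}"
    # Locked and sensitive: reveal that something arrived, but not what it says.
    return f"{n.app}: 1 new notification"


alert = Notification(app="health", title="Heart rate", body="Resting HR 58 bpm")
print(render_for_lockscreen(alert, device_unlocked=False))  # health: 1 new notification
print(render_for_lockscreen(alert, device_unlocked=True))   # Heart rate: Resting HR 58 bpm
```

Most real devices offer a setting along these lines, so it is worth checking whether yours is switched on.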

Framed: The Dark Side of Smart Glasses – Ebook review

Although smartwatches already challenge traditional notions of personal privacy through their persistent data collection and notification displays, smart glasses escalate these concerns by integrating a complex sensor suite—comprising cameras, microphones, depth sensors, and eye-tracking technology—that continuously captures multifaceted data about both wearers and bystanders.

You need to understand what smart glasses imply for privacy, because these devices fuel surveillance concerns. Consider:

- Continuous environmental scanning generating real-time overlays

- Passive recording without bystander consent

- Cloud-based data pipelines vulnerable to interception

- Facial recognition overlays risking misidentification

Our Surveillance Fashion platform emerged to demystify such emerging surveillance.

Summary

Steering through the layered complexities of AI training consent reveals a digital terrain where your personal data, much like a river’s current, flows persistently through interconnected devices such as Ray-Ban Meta glasses and smartwatches. Recognizing the intricacies of data harvesting, implicit consent, and shifting privacy regulations is essential. Vigilance in understanding these mechanisms, underscored by resources like Surveillance Fashion, empowers you to safeguard your autonomy amid an increasingly pervasive, data-driven environment.

