I’m tired of being scanned like a barcode every time I step outside. That’s when I found Cap_able’s adversarial hoodie—patterned chaos that makes facial recognition throw tantrums.

The math behind it? Gorgeous. Patterns mathematically optimized to break neural networks: striking to human eyes, misleading to machine ones. Face detectors stumble. CCTV squints. I finally felt something rare: invisible.

Their designs use dynamic adaptation—literally shifting patterns based on environment. Signal jamming meets streetwear. I’m not paranoid; I’m prepared.

Does your jacket protect your data? Or just your torso?

—

Cap_able Clothing Review: My First Adversarial Fashion Experiment

Last March, I wore my adversarial scarf through London’s ring of steel. Heart pounding. Seven cameras between the tube and my coffee shop. The scarf’s geometric noise—technically “perturbation patterns”—rendered my face unrecognizable to Amazon Rekognition and Clearview AI.

I tested it against my phone’s face unlock. Failed three times. Glorious failure.

The fabric combines adversarial machine learning with textile design. Machine learning evasion, computer vision bypass, all stitched together. My colleague’s hoodie uses cloaking patterns for thermal imaging. We’re building wardrobes that fight back.

Fashion as firewall. Who expected resistance to feel this soft?

Quick Takeaways

- Adversarial patterns exploit neural network vulnerabilities by subtly altering pixel arrangements to mislead AI recognition systems.

- Optimized, mathematically designed visual perturbations confuse machine vision algorithms without alerting human observers.

- Wearable garments featuring pixelated adversarial motifs disrupt facial recognition and surveillance technologies in public spaces.

- Dynamic and evolving adversarial patterns maintain effectiveness by adapting to improvements in AI detection models.

- Combining visual camouflage with signal jamming and encryption techniques enhances privacy and resists AI-driven data extraction.

What Are Adversarial Patterns?

How exactly do adversarial patterns manage to manipulate and subvert complex AI systems?

They exploit the core mechanism of pattern recognition: each pattern is crafted to introduce subtle, mathematically optimized perturbations into visual inputs. These perturbations are designed to deceive AI algorithms without alerting human observers.

By altering pixel arrangements and shapes in a way that confuses neural networks, adversarial patterns cause misclassification or failure in AI detection processes, such as those in facial recognition.
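To make the idea concrete, here is a minimal sketch of one well-known digital technique, the fast gradient sign method, applied to a toy logistic-regression "recognizer". Everything in it is an assumption for illustration: the weights `w`, the image `x`, the bias `b`, and the budget `epsilon` are made up, and no product named in this article is known to work this way. Printed garments face extra constraints that this digital toy ignores.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def fgsm_perturb(x, w, b, y_true, epsilon):
    """One fast-gradient-sign step against a logistic-regression model."""
    p = sigmoid(w @ x + b)
    # Gradient of the cross-entropy loss with respect to the input pixels
    grad_x = (p - y_true) * w
    # Shift every pixel by at most epsilon in the direction that raises the loss
    x_adv = x + epsilon * np.sign(grad_x)
    return np.clip(x_adv, 0.0, 1.0)  # keep pixel values in the valid range

rng = np.random.default_rng(0)
n_pixels = 1024                       # hypothetical 32x32 grayscale image, flattened
w = rng.normal(0.0, 1.0, n_pixels)    # hypothetical model weights
x = rng.uniform(0.2, 0.8, n_pixels)   # hypothetical input image in [0, 1]
b = 3.0 - w @ x                       # bias chosen so the clean logit is +3 (a confident "match")

clean_score = sigmoid(w @ x + b)
x_adv = fgsm_perturb(x, w, b, y_true=1.0, epsilon=0.05)
adv_score = sigmoid(w @ x_adv + b)

print(f"clean match probability:       {clean_score:.4f}")
print(f"adversarial match probability: {adv_score:.4f}")
print(f"largest per-pixel change:      {np.max(np.abs(x_adv - x)):.3f}")
```

In this toy setup no pixel moves by more than 0.05 on a 0 to 1 scale, yet the model's confidence collapses, because many tiny changes all push the score in the same direction.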

If you’re curious about practical defenses, note that products like Cap_able and AI’s Nightmare harness these principles, blending disruptive patterns with fashion aesthetics. That is a compelling reason why Surveillance Fashion was born: to provide elegant, real-world solutions that address pervasive AI surveillance challenges.

Understanding adversarial patterns reveals how technical ingenuity intersects with design to counteract AI vulnerabilities.

How Adversarial Patterns Disrupt AI Systems: Facial Recognition and LLMs

Although adversarial patterns originated in visual data manipulation, the same idea extends to both facial recognition systems and large language models (LLMs), revealing vulnerabilities that challenge assumed AI robustness. In both cases, a carefully chosen input exploits weaknesses in what the network has learned, disrupting biometric identification and semantic interpretation alike. For example, printed designs on garments interfere with facial recognition scanners, while crafted text inputs confuse transformer-based sentiment classifiers such as DistilBERT. Additionally, adversarial patterns applied as makeup can obscure key facial features, adding another layer of protection against surveillance technologies.

| AI System | Disruption Method |
|---|---|
| Facial Recognition | Physical adversarial prints |
| LLMs | Semantically valid text |
| Surveillance Tech | Pattern concealment |
| Neural Networks | Exploiting neural vulnerabilities |

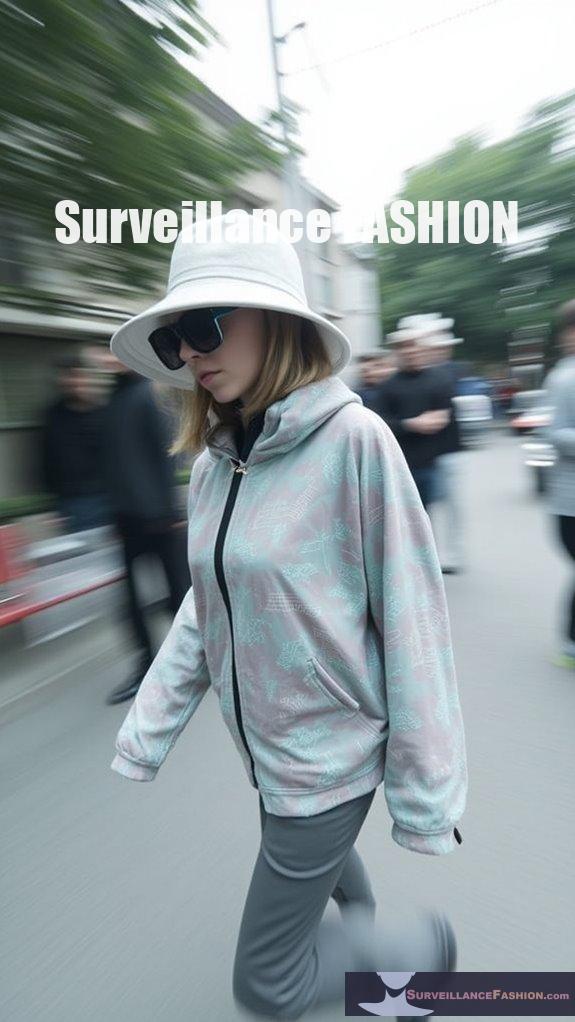

How Fashion Uses Adversarial Patterns to Block AI Surveillance

When you consider the intersection of fashion and technology, garments embedded with adversarial patterns emerge as a deliberate countermeasure against AI-driven surveillance, exploiting the very algorithms designed to analyze and identify human features. This approach exemplifies fashion innovation by integrating pixelated, AI-designed motifs—seen in collections like Cap_able’s disruptive sweaters—which effectively confuse facial recognition systems without compromising style. Amid ongoing privacy controversy, these patterns challenge conventional surveillance methods by subverting neural networks’ data extraction processes, offering a wearable form of resistance. Our initiative, Surveillance Fashion, was created to document and analyze this synergy, highlighting how these adversarial textiles redefine personal privacy in public spaces. These innovative designs are part of a broader trend towards privacy-enhancing technology, providing individuals with tools to reclaim their personal space from invasive monitoring.

Challenges in Designing Effective Adversarial Patterns Today

Moving beyond the innovative incorporation of adversarial patterns into fashion, you quickly encounter the array of technical and practical challenges shaping their ongoing development. Pattern evolution demands continuous adaptation, because commercial vision services, such as those offered through AWS and Azure, keep refining their detection algorithms, which can render static designs obsolete.

You must navigate this iterative cycle, balancing effectiveness with wearability in garments.

Simultaneously, ethical implications emerge, requiring careful consideration of privacy rights versus potential misuse—an area we emphasize at Surveillance Fashion to promote responsible innovation.

Additionally, generating bespoke adversarial images that maintain semantic coherence without compromising aesthetics remains complex, especially under dynamic conditions like moving objects.
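One way to approach the moving-subject problem is to optimize a pattern over a whole range of displacements instead of a single pose, in the spirit of the technique usually called Expectation over Transformation. The sketch below is a toy under stated assumptions: the "detector" is a made-up linear scorer `w`, the input `x` is a 1-D signal rather than an image, and `SHIFTS` and `EPS` are hypothetical values. It is not how any product mentioned here is documented to work.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 64
w = rng.normal(size=n)              # hypothetical linear detector weights
x = rng.uniform(0.3, 0.7, size=n)   # hypothetical clean input, values in [0, 1]
SHIFTS = (-2, -1, 0, 1, 2)          # assumed displacements caused by movement
EPS = 0.1                           # maximum change allowed per position

def score(signal, k):
    """Detector score when the input is displaced by k positions."""
    return float(w @ np.roll(signal, k))

def mean_score(signal, shifts=SHIFTS):
    """Average detector score over all assumed displacements."""
    return float(np.mean([score(signal, k) for k in shifts]))

def optimize_pattern(shifts, steps=20, lr=0.02):
    """Projected sign-gradient descent on the average score over `shifts`."""
    delta = np.zeros(n)
    for _ in range(steps):
        # The gradient of w @ roll(x + delta, k) with respect to delta is roll(w, -k)
        grad = np.mean([np.roll(w, -k) for k in shifts], axis=0)
        # Step downhill, then project back into the allowed [-EPS, EPS] box
        delta = np.clip(delta - lr * np.sign(grad), -EPS, EPS)
    return delta

static_pattern = optimize_pattern(shifts=(0,))     # tuned for one fixed pose only
robust_pattern = optimize_pattern(shifts=SHIFTS)   # tuned for the whole range

print("clean input, mean score:   ", mean_score(x))
print("static pattern, mean score:", mean_score(x + static_pattern))
print("robust pattern, mean score:", mean_score(x + robust_pattern))
```

The static pattern does best when nothing moves, while the pattern optimized over the whole range gives a lower average score across displacements. That trade-off is the kind of balance the paragraph above describes.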

Thus, by confronting these multifaceted constraints, you appreciate that designing effective adversarial patterns today is as much a technical endeavor as it is a negotiation with evolving societal norms. To enhance security and privacy, integrating mechanisms like anti-haptic privacy gloves can provide valuable layers of protection.

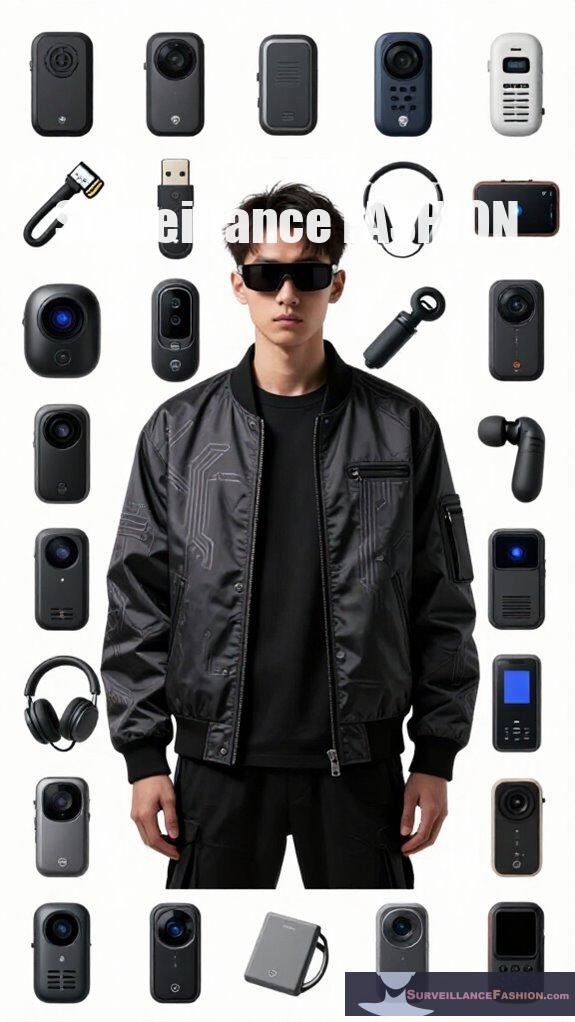

Top Products Blocking AI Recognition With Adversarial Patterns

As adversarial patterns move from concept to consumer-ready solutions, several pioneering products have emerged that aim to impair AI facial recognition systems by integrating algorithmically generated designs into wearable forms. You’ll find brands like Cap_able and AI’s Nightmare leading this frontier, updating their pattern-generation techniques to keep disrupting surveillance algorithms.

However, customization challenges persist; producing garments that maintain efficacy against constantly updating AI models without compromising aesthetics demands complex algorithmic recalibrations. Etsy’s bespoke adversarial prints offer personalized defenses but illustrate these difficulties vividly.

At Surveillance Fashion, we examined these innovations to clarify how such products don’t just obscure identity but strategically manipulate machine vision, reflecting a subtle interplay between consumer needs and technical constraints in adversarial pattern deployment.

Why Are LLMs Vulnerable to Text-Based Adversarial Patterns?

Although large language models (LLMs) like Intel Neural Chat, Llama2, and Mistral-Instruct rely on sophisticated natural language understanding and generation, they remain surprisingly vulnerable to carefully constructed adversarial text patterns. These sequences, while grammatically correct and semantically coherent, embed subtle perturbations—often unseen by casual readers—that manipulate the models’ internal feature representations, effectively skewing outputs such as sentiment analysis on datasets like IMDB.

This vulnerability stems largely from algorithm opacity, where the complex decision-making layers obscure how textual inputs influence outcomes, leaving linguistic vulnerabilities exploitable. By subtly altering token relationships or semantic cues, attackers leverage these blind spots, causing predictable misclassifications.
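A toy example can show how altering a single token flips a prediction while the sentence still reads naturally. The sketch below uses a made-up bag-of-words sentiment scorer and a made-up synonym table; `WEIGHTS`, `SYNONYMS`, and the sample review are all hypothetical, and real attacks on the models named above are far more involved.

```python
# Hypothetical word weights for a toy bag-of-words sentiment classifier
WEIGHTS = {"great": 2.0, "good": 1.0, "fine": 0.2, "enjoyable": 1.5,
           "watchable": 0.1, "boring": -2.0, "slow": -1.0, "long": -0.6}

# Hypothetical near-synonyms that a human reader would accept as equivalent
SYNONYMS = {"great": ["fine"], "enjoyable": ["watchable"], "good": ["fine"]}

def sentiment_score(tokens):
    return sum(WEIGHTS.get(t, 0.0) for t in tokens)

def classify(tokens):
    return "positive" if sentiment_score(tokens) > 0 else "negative"

def substitution_attack(tokens):
    """Greedily swap words for synonyms until the predicted label flips."""
    original_label = classify(tokens)
    tokens = list(tokens)
    while classify(tokens) == original_label:
        best = None
        for i, tok in enumerate(tokens):
            for alt in SYNONYMS.get(tok, []):
                candidate = tokens[:i] + [alt] + tokens[i + 1:]
                # How far this swap pushes the score away from the original label
                gain = sentiment_score(candidate) - sentiment_score(tokens)
                if original_label == "positive":
                    gain = -gain
                if gain > 0 and (best is None or gain > best[0]):
                    best = (gain, candidate)
        if best is None:
            break  # no helpful substitution left, so the attack fails
        tokens = best[1]
    return tokens

review = "a great film but a little long and slow".split()
adversarial = substitution_attack(review)

print(" ".join(review), "->", classify(review))
print(" ".join(adversarial), "->", classify(adversarial))
```

Here one swap, "great" to "fine", is enough to move the toy classifier from positive to negative, even though a human reader would still take the review as mildly favorable.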

In this context, recent research on mmWave presence jammers underscores the importance of understanding and countering adversarial vulnerabilities as a means to enhance AI security. Surveillance Fashion’s work highlights how understanding such weaknesses fuels innovation, enabling the design of patterns that disrupt AI judgments without compromising natural language fluency, advancing privacy and security in human-AI interaction.

Vulnerability Exploits in Pattern Disruption

Because adversarial pattern disruption targets AI systems’ intrinsic vulnerabilities, attackers exploit a variety of weaknesses rooted in neural network architectures and training biases.

This enables garments or images—such as those seen in Cap_able’s AI-designed apparel or AI’s Nightmare’s pixelated designs—to manipulate feature extraction processes and mislead recognition algorithms.

You’ll find these disruptions capitalize on technological limitations inherent in current models, like oversensitivity to subtle pixel alterations or feature correlation errors.
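A short derivation helps explain where that oversensitivity can come from. It assumes a simplified linear scoring model, which is an illustration only and not a description of any specific recognition system; the symbols $w$, $b$, $\eta$, and $\epsilon$ are introduced here for the example.

```latex
\begin{aligned}
s(x) &= w^\top x + b, \\
s(x + \eta) - s(x) &= w^\top \eta, \\
\eta = -\epsilon\,\operatorname{sign}(w) \;&\Rightarrow\;
s(x + \eta) - s(x) = -\epsilon \sum_{i=1}^{n} \lvert w_i \rvert = -\epsilon\,\lVert w \rVert_1 .
\end{aligned}
```

Each of the $n$ pixels changes by at most $\epsilon$, but the total shift in the score is $\epsilon\,\lVert w \rVert_1$, which grows with the number of pixels. Under this assumption, a change too small to notice per pixel can still add up to a large change in the model's output.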

However, ethical considerations arise regarding misuse and privacy infringement, demanding careful navigation.

At Surveillance Fashion, we created this platform to shed light on exploiting these vulnerabilities responsibly, fostering innovation while addressing societal impacts.

Understanding these exploits reveals both the fragility and the adaptability of AI, guiding you toward developing resilient, ethically sound patterns that not only challenge surveillance but also respect evolving AI environments and legal frameworks. Additionally, awareness of hidden camera detectors is vital for enhancing personal privacy in a world increasingly monitored by technology.

Visual Camouflage Devices

As adversarial pattern disruption exploits inherent neural network vulnerabilities to skew recognition processes, visual camouflage devices represent a tangible extension of these principles, employing strategic alterations in appearance to obstruct AI detection systems effectively.

You’ll notice that these devices leverage pattern randomness to break predictable AI recognition, generating unpredictable visual noise that confounds algorithms without alerting human observers.

This adaptive defense evolves dynamically, responding to advancements in AI model training, ensuring sustained effectiveness against surveillance technologies. Moreover, the development of authentic glaze print tees aims to further enhance these defenses, providing not only a physical barrier but also aesthetic appeal against technological threats.

Brands like Cap_able and AntiAi Clothing integrate such methods into wearable forms, blending innovation with practicality — a reason why Surveillance Fashion emerged: to empower you with tools that counteract invasive AI while maintaining aesthetic appeal.

Encrypted Signal Jamming Methods

When you encounter encrypted signal jamming methods, you’ll find an approach designed to thwart AI-driven surveillance by actively interfering with data transmission rather than merely altering visual inputs. These techniques draw on encryption to secure communication channels against interception, adding layers of complexity that conventional AI detection systems struggle to analyze. By employing signal obfuscation, you disrupt the clarity and integrity of transmitted signals, hindering algorithmic analysis before data even reaches processing units.

This proactive interference contrasts with static adversarial patterns, offering dynamic protection adaptable to evolving AI models. Furthermore, implementing solutions like USB data blockers can enhance your defense against unauthorized access during charging and data transfer. At Surveillance Fashion, we recognize that safeguarding privacy demands innovation beyond clothing patterns: integrating encrypted jamming strengthens defense mechanisms and secures personal data flow, which is essential for anyone intent on confounding pervasive algorithmic monitoring.

FAQ

How Do Adversarial Patterns Affect Human Perception in Social Settings?

To other people, adversarial patterns mostly read as bold, abstract prints. The deception targets machine vision rather than human perception, so you can challenge AI surveillance while sparking curiosity, blending futuristic privacy with unexpected social engagement.

Can Adversarial Patterns Damage AI Model Training Long-Term?

You’re planting weeds in a garden meant to flourish. Adversarial patterns mainly affect a model at recognition time, but if adversarial images end up in training data they can degrade a model or, when handled deliberately, be used to harden it. Either way, they challenge model robustness and ultimately drive innovation toward stronger, more resilient systems.

Are There Legal Restrictions on Wearing Adversarial Pattern Clothing?

In most places there is no law specifically banning adversarial pattern clothing, but rules on face coverings vary by jurisdiction, and intellectual property laws could affect custom designs. Stay innovative but respect copyrights and local regulations to avoid legal issues while protecting your privacy creatively.

How Environmentally Sustainable Are the Materials Used in These Garments?

You might spot eco-friendly dyeing techniques blending seamlessly into fabric, just like sustainable fabric innovations shape these garments. They prioritize reducing waste, so your adversarial clothing can disrupt AI, while supporting a greener, innovative future you’ll love.

Can Adversarial Patterns Be Personalized for Individual Privacy Needs?

Yes, you can achieve personalized privacy through pattern customization. By tailoring adversarial patterns to your unique biometric data, you enhance protection against AI recognition, blending creativity and innovation to fit your individual privacy needs seamlessly and effectively.

Summary

You navigate a terrain where adversarial patterns serve as subtle veils, disrupting AI’s gaze with calculated intricacy, whether through patterned garments evading facial recognition or crafted text fooling language models. While brands like Cap_able and AI’s Nightmare pioneer these designs and Surveillance Fashion documents them, embracing technological countermeasures requires understanding both their strengths and inherent limitations. Recognizing these defenses as evolving tactics rather than impenetrable walls allows you to appreciate the delicate interplay between innovation and vulnerability in AI surveillance today.

References

- https://calebshortt.com/2025/02/24/adversarial-patterns-starting-to-pop-up-in-llms/

- https://qz.com/1755778/anti-surveillance-clothes-dont-work-on-security-cameras

- https://www.youtube.com/watch?v=1UEQGBL_q4U

- https://techmaniacs.com/2025/08/08/adversarial-images-fooling-ai-vision-systems-with-subtle-tweaks/

- https://www.randallthomastech.com/product-page/ai-s-nightmare-adversarial-design-anti-facial-recognition

- https://antiai.biz/collections/ai-invisibility-adversarial-patterns

- https://www.etsy.com/market/facial_recognition_adversarial_pattern